Original article. When reposting, please credit: reposted from 慢慢的回味

Link to this article: The Differences Between ACGAN and CGAN

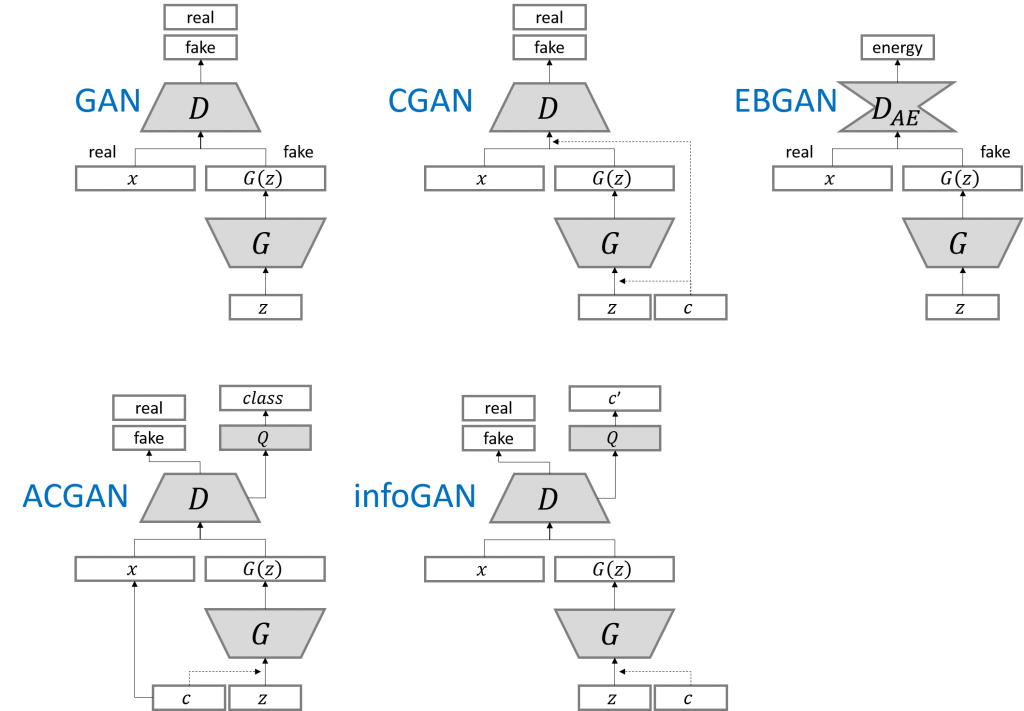

ACGAN differs from CGAN as follows:

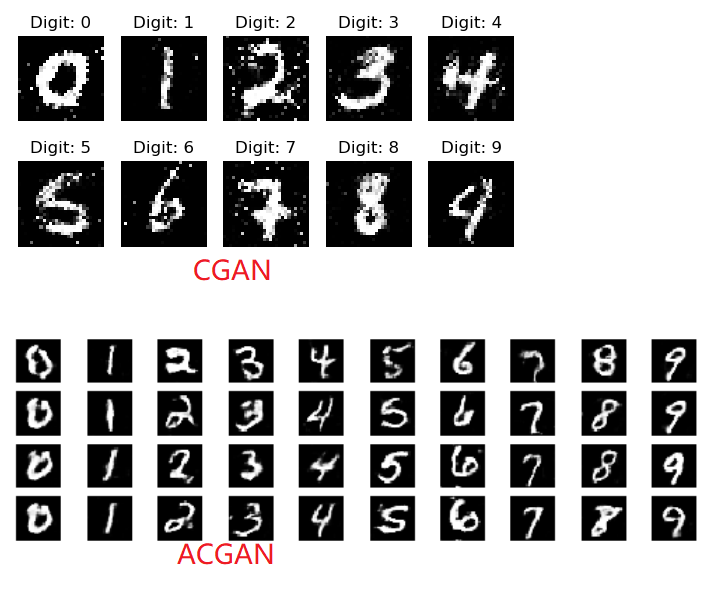

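1 As in CGAN, the label is mixed into the generator's input.

2 Unlike CGAN, ACGAN no longer mixes the label into the discriminator's input. Instead, the label serves as a target for the discriminator's output, and this feedback improves what the discriminator learns.

3 Another difference is that the generator and the discriminator no longer use CGAN's fully connected layers but ACGAN's deep convolutional layers (a change introduced by DCGAN). Convolutions extract image features better, so the edges in ACGAN-generated images are more continuous and the images look more realistic.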

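The generator's input, shown below, is assembled exactly as in CGAN: the label embedding is multiplied element-wise with the noise vector.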

```python
noise = Input(shape=(self.latent_dim,))
label = Input(shape=(1,), dtype='int32')
label_embedding = Flatten()(Embedding(self.num_classes, 100)(label))

model_input = multiply([noise, label_embedding])
img = model(model_input)
```
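To see what the `Embedding` and `multiply` pair does numerically, here is a minimal NumPy sketch. It assumes the embedding behaves as a plain lookup table with one row per class. The names `embedding_table` and `condition_noise`, the random initialization and the seed are illustrative and not part of the original program.

```python
import numpy as np

num_classes, latent_dim = 10, 100
rng = np.random.default_rng(0)

# Stand-in for the trainable Embedding weights: one latent_dim-sized row per class.
embedding_table = rng.normal(size=(num_classes, latent_dim))


def condition_noise(noise, labels):
    """Multiply each noise vector element-wise by the embedding row of its label."""
    label_embedding = embedding_table[labels.reshape(-1)]  # (batch, latent_dim)
    return noise * label_embedding


noise = rng.normal(size=(4, latent_dim))
labels = np.array([[3], [3], [7], [0]])  # shape (batch, 1), like the Keras label Input
model_input = condition_noise(noise, labels)
print(model_input.shape)  # (4, 100)
```

The label only rescales the noise coordinates, so two samples with the same label share one scaling vector while keeping their own noise.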

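The discriminator model below still takes img as input, but its output has two parts:

1 validity: whether the image is judged to be forged.

2 label: a softmax-activated 10-dimensional output indicating which digit the image shows.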

```python
img = Input(shape=self.img_shape)

# Extract feature representation
features = model(img)

# Determine validity and label of the image
validity = Dense(1, activation="sigmoid")(features)
label = Dense(self.num_classes, activation="softmax")(features)

return Model(img, [validity, label])
```
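The two output heads can be illustrated without Keras. The sketch below applies a sigmoid head and a softmax head to the same feature vectors. It is a simplified stand-in, not the article's model: biases are omitted, the weights are random, and the names `discriminator_heads`, `w_validity` and `w_label` as well as the feature size are assumptions made for the example.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def softmax(x):
    shifted = x - x.max(axis=1, keepdims=True)  # subtract the row max for numerical stability
    e = np.exp(shifted)
    return e / e.sum(axis=1, keepdims=True)


def discriminator_heads(features, w_validity, w_label):
    """Apply two dense heads (biases omitted) to the same feature vectors."""
    validity = sigmoid(features @ w_validity)   # (batch, 1): real-vs-fake score
    label_probs = softmax(features @ w_label)   # (batch, num_classes): class probabilities
    return validity, label_probs


rng = np.random.default_rng(0)
features = rng.normal(size=(5, 2048))           # flattened conv features (size is illustrative)
w_validity = rng.normal(scale=0.01, size=(2048, 1))
w_label = rng.normal(scale=0.01, size=(2048, 10))

validity, label_probs = discriminator_heads(features, w_validity, w_label)
print(validity.shape, label_probs.shape)  # (5, 1) (5, 10)
```

Both heads read the same features, so the class target also shapes the representation used for the real-versus-fake decision.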

Therefore, when training the discriminator or the generator, the targets img_labels and sampled_labels are supplied as well.

```python
# Train the discriminator
d_loss_real = self.discriminator.train_on_batch(imgs, [valid, img_labels])
d_loss_fake = self.discriminator.train_on_batch(gen_imgs, [fake, sampled_labels])
d_loss = 0.5 * np.add(d_loss_real, d_loss_fake)

# ---------------------
#  Train Generator
# ---------------------

# Train the generator
g_loss = self.combined.train_on_batch([noise, sampled_labels], [valid, sampled_labels])
```
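The full program compiles both models with the loss list `['binary_crossentropy', 'sparse_categorical_crossentropy']`, so each `train_on_batch` call minimizes the sum of a validity loss and a label loss. The NumPy sketch below recomputes that sum for a toy batch. The helper names and the toy predictions are assumptions for illustration. It uses default loss weights of 1 and does not reproduce Keras internals exactly.

```python
import numpy as np


def binary_crossentropy(y_true, y_pred, eps=1e-7):
    """Mean binary cross-entropy between 0/1 targets and predicted probabilities."""
    y_pred = np.clip(y_pred, eps, 1.0 - eps)
    return float(np.mean(-(y_true * np.log(y_pred) + (1 - y_true) * np.log(1 - y_pred))))


def sparse_categorical_crossentropy(labels, probs, eps=1e-7):
    """Mean negative log-probability assigned to the integer class labels."""
    picked = probs[np.arange(len(labels)), labels.reshape(-1)]
    return float(np.mean(-np.log(np.clip(picked, eps, 1.0))))


def acgan_batch_loss(validity_target, label_target, validity_pred, label_probs):
    """Sum of the validity loss and the label loss for one batch."""
    return (binary_crossentropy(validity_target, validity_pred)
            + sparse_categorical_crossentropy(label_target, label_probs))


batch = 4
valid = np.ones((batch, 1))                     # "real" targets, as in the training loop
labels = np.array([[1], [4], [4], [9]])         # integer digit labels, shape (batch, 1)
undecided_validity = np.full((batch, 1), 0.5)   # a discriminator that cannot tell real from fake
uniform_probs = np.full((batch, 10), 0.1)       # and assigns equal probability to every digit

loss = acgan_batch_loss(valid, labels, undecided_validity, uniform_probs)
print(loss)  # log(2) + log(10), about 2.996
```

An untrained, maximally uncertain discriminator therefore starts near log(20). The generator step uses the same two terms, with `valid` as the validity target so that it is rewarded for fooling the discriminator.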

Appendix: a Keras-based test program

```python
from __future__ import print_function, division

import os

from keras.datasets import mnist
from keras.layers import Input, Dense, Reshape, Flatten, Dropout, multiply
from keras.layers import BatchNormalization, Activation, Embedding, ZeroPadding2D
from keras.layers.advanced_activations import LeakyReLU
from keras.layers.convolutional import UpSampling2D, Conv2D
from keras.models import Sequential, Model
from keras.optimizers import Adam

import matplotlib.pyplot as plt

import numpy as np


class ACGAN():
    def __init__(self):
        # Input shape
        self.img_rows = 28
        self.img_cols = 28
        self.channels = 1
        self.img_shape = (self.img_rows, self.img_cols, self.channels)
        self.num_classes = 10
        self.latent_dim = 100

        optimizer = Adam(0.0002, 0.5)
        losses = ['binary_crossentropy', 'sparse_categorical_crossentropy']

        # Build and compile the discriminator
        self.discriminator = self.build_discriminator()
        self.discriminator.compile(loss=losses,
                                   optimizer=optimizer,
                                   metrics=['accuracy'])

        # Build the generator
        self.generator = self.build_generator()

        # The generator takes noise and the target label as input
        # and generates the corresponding digit of that label
        noise = Input(shape=(self.latent_dim,))
        label = Input(shape=(1,))
        img = self.generator([noise, label])

        # For the combined model we will only train the generator
        self.discriminator.trainable = False

        # The discriminator takes generated image as input and determines validity
        # and the label of that image
        valid, target_label = self.discriminator(img)

        # The combined model (stacked generator and discriminator)
        # Trains the generator to fool the discriminator
        self.combined = Model([noise, label], [valid, target_label])
        self.combined.compile(loss=losses, optimizer=optimizer)

    def build_generator(self):

        model = Sequential()

        model.add(Dense(128 * 7 * 7, activation="relu", input_dim=self.latent_dim))
        model.add(Reshape((7, 7, 128)))
        model.add(BatchNormalization(momentum=0.8))
        model.add(UpSampling2D())
        model.add(Conv2D(128, kernel_size=3, padding="same"))
        model.add(Activation("relu"))
        model.add(BatchNormalization(momentum=0.8))
        model.add(UpSampling2D())
        model.add(Conv2D(64, kernel_size=3, padding="same"))
        model.add(Activation("relu"))
        model.add(BatchNormalization(momentum=0.8))
        model.add(Conv2D(self.channels, kernel_size=3, padding='same'))
        model.add(Activation("tanh"))

        model.summary()

        noise = Input(shape=(self.latent_dim,))
        label = Input(shape=(1,), dtype='int32')
        label_embedding = Flatten()(Embedding(self.num_classes, 100)(label))

        model_input = multiply([noise, label_embedding])
        img = model(model_input)

        return Model([noise, label], img)

    def build_discriminator(self):

        model = Sequential()

        model.add(Conv2D(16, kernel_size=3, strides=2, input_shape=self.img_shape, padding="same"))
        model.add(LeakyReLU(alpha=0.2))
        model.add(Dropout(0.25))
        model.add(Conv2D(32, kernel_size=3, strides=2, padding="same"))
        model.add(ZeroPadding2D(padding=((0, 1), (0, 1))))
        model.add(LeakyReLU(alpha=0.2))
        model.add(Dropout(0.25))
        model.add(BatchNormalization(momentum=0.8))
        model.add(Conv2D(64, kernel_size=3, strides=2, padding="same"))
        model.add(LeakyReLU(alpha=0.2))
        model.add(Dropout(0.25))
        model.add(BatchNormalization(momentum=0.8))
        model.add(Conv2D(128, kernel_size=3, strides=1, padding="same"))
        model.add(LeakyReLU(alpha=0.2))
        model.add(Dropout(0.25))

        model.add(Flatten())
        model.summary()

        img = Input(shape=self.img_shape)

        # Extract feature representation
        features = model(img)

        # Determine validity and label of the image
        validity = Dense(1, activation="sigmoid")(features)
        label = Dense(self.num_classes, activation="softmax")(features)

        return Model(img, [validity, label])

    def train(self, epochs, batch_size=128, sample_interval=50):

        # Load the dataset
        (X_train, y_train), (_, _) = mnist.load_data()

        # Configure inputs: scale pixels to [-1, 1] to match the generator's tanh output
        X_train = (X_train.astype(np.float32) - 127.5) / 127.5
        X_train = np.expand_dims(X_train, axis=3)
        y_train = y_train.reshape(-1, 1)

        # Adversarial ground truths
        valid = np.ones((batch_size, 1))
        fake = np.zeros((batch_size, 1))

        for epoch in range(epochs):

            # ---------------------
            #  Train Discriminator
            # ---------------------

            # Select a random batch of images
            idx = np.random.randint(0, X_train.shape[0], batch_size)
            imgs = X_train[idx]

            # Sample noise as generator input
            noise = np.random.normal(0, 1, (batch_size, 100))

            # The labels of the digits that the generator tries to create an
            # image representation of
            sampled_labels = np.random.randint(0, 10, (batch_size, 1))

            # Generate a batch of new images
            gen_imgs = self.generator.predict([noise, sampled_labels])

            # Image labels. 0-9
            img_labels = y_train[idx]

            # Train the discriminator
            d_loss_real = self.discriminator.train_on_batch(imgs, [valid, img_labels])
            d_loss_fake = self.discriminator.train_on_batch(gen_imgs, [fake, sampled_labels])
            d_loss = 0.5 * np.add(d_loss_real, d_loss_fake)

            # ---------------------
            #  Train Generator
            # ---------------------

            # Train the generator
            g_loss = self.combined.train_on_batch([noise, sampled_labels], [valid, sampled_labels])

            # Plot the progress
            # d_loss holds [total loss, validity loss, label loss, validity acc, label acc]
            print("%d [D loss: %f, acc.: %.2f%%, op_acc: %.2f%%] [G loss: %f]"
                  % (epoch, d_loss[0], 100 * d_loss[3], 100 * d_loss[4], g_loss[0]))

            # If at save interval => save generated image samples
            if epoch % sample_interval == 0:
                self.save_model()
                self.sample_images(epoch)

    def sample_images(self, epoch):
        r, c = 10, 10
        noise = np.random.normal(0, 1, (r * c, 100))
        # One row per repetition, one column per digit 0-9
        sampled_labels = np.array([num for _ in range(r) for num in range(c)]).reshape(-1, 1)
        gen_imgs = self.generator.predict([noise, sampled_labels])
        # Rescale images 0 - 1
        gen_imgs = 0.5 * gen_imgs + 0.5

        fig, axs = plt.subplots(r, c)
        cnt = 0
        for i in range(r):
            for j in range(c):
                axs[i, j].imshow(gen_imgs[cnt, :, :, 0], cmap='gray')
                axs[i, j].axis('off')
                cnt += 1
        fig.savefig("images/%d.png" % epoch)
        plt.close()

    def save_model(self):

        def save(model, model_name):
            model_path = "saved_model/%s.json" % model_name
            weights_path = "saved_model/%s_weights.hdf5" % model_name
            options = {"file_arch": model_path,
                       "file_weight": weights_path}
            json_string = model.to_json()
            with open(options['file_arch'], 'w') as f:
                f.write(json_string)
            model.save_weights(options['file_weight'])

        save(self.generator, "generator")
        save(self.discriminator, "discriminator")


if __name__ == '__main__':
    # The output directories must exist before images and models are saved
    for directory in ("images", "saved_model"):
        if not os.path.isdir(directory):
            os.makedirs(directory)

    acgan = ACGAN()
    acgan.train(epochs=14000, batch_size=32, sample_interval=200)
```
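As a sanity check on the layer stacks in the program above, the spatial sizes can be traced by hand. The sketch below does that arithmetic in plain Python. It assumes the standard rule that a convolution with `padding="same"` outputs `ceil(size / stride)` pixels per side, and that `UpSampling2D()` doubles each side by default. The function names are hypothetical helpers, not part of the original program.

```python
import math


def conv_same(size, stride):
    """Spatial size after a Conv2D layer with padding='same'."""
    return math.ceil(size / stride)


def discriminator_feature_count(size=28, last_filters=128):
    """Number of values produced by Flatten() at the end of the discriminator."""
    size = conv_same(size, 2)   # Conv2D(16, strides=2): 28 -> 14
    size = conv_same(size, 2)   # Conv2D(32, strides=2): 14 -> 7
    size += 1                   # ZeroPadding2D(((0, 1), (0, 1))): 7 -> 8
    size = conv_same(size, 2)   # Conv2D(64, strides=2): 8 -> 4
    size = conv_same(size, 1)   # Conv2D(128, strides=1): 4 -> 4
    return size * size * last_filters


def generator_output_size(size=7):
    """Side length of the generated image, starting from the 7x7 reshape."""
    size *= 2  # first UpSampling2D(): 7 -> 14
    size *= 2  # second UpSampling2D(): 14 -> 28
    return size  # the 'same' convolutions leave the size unchanged


print(discriminator_feature_count())  # 2048
print(generator_output_size())        # 28
```

The generator therefore produces 28x28 images that match the MNIST input shape, and both discriminator heads receive a 2048-dimensional feature vector.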

This work is licensed under a Creative Commons Attribution 4.0 International License.